I spend most of my time at Evergrowth working alongside the revops, sales enablement, and GTM leaders at enterprise sales teams. One pattern comes up in almost every conversation — not as something they're experiencing now, but as something they've lived through before, or watched peers struggle with. It's the gap between picking a sales methodology and getting it to actually stick.

The pattern went like this. An enablement team picked a methodology — MEDDIC, MEDDPICC, SPICED, BANT — ran a workshop, built Salesforce fields for each element, and waited for the win rate to move. Six months in, the dashboard showed maybe 25–30% of progressed deals had the methodology fully captured. The framework wasn't wrong. The training was solid. Managers held coaching sessions. Reps still defaulted to whatever they were doing before. This is a well-documented industry pattern — and it's the gap our customers came to us to close.

The problem was never the methodology itself. It was the seam between the methodology and the live moment: the call, the email, the follow-up where reps either remembered to use the Pain probe or didn't.

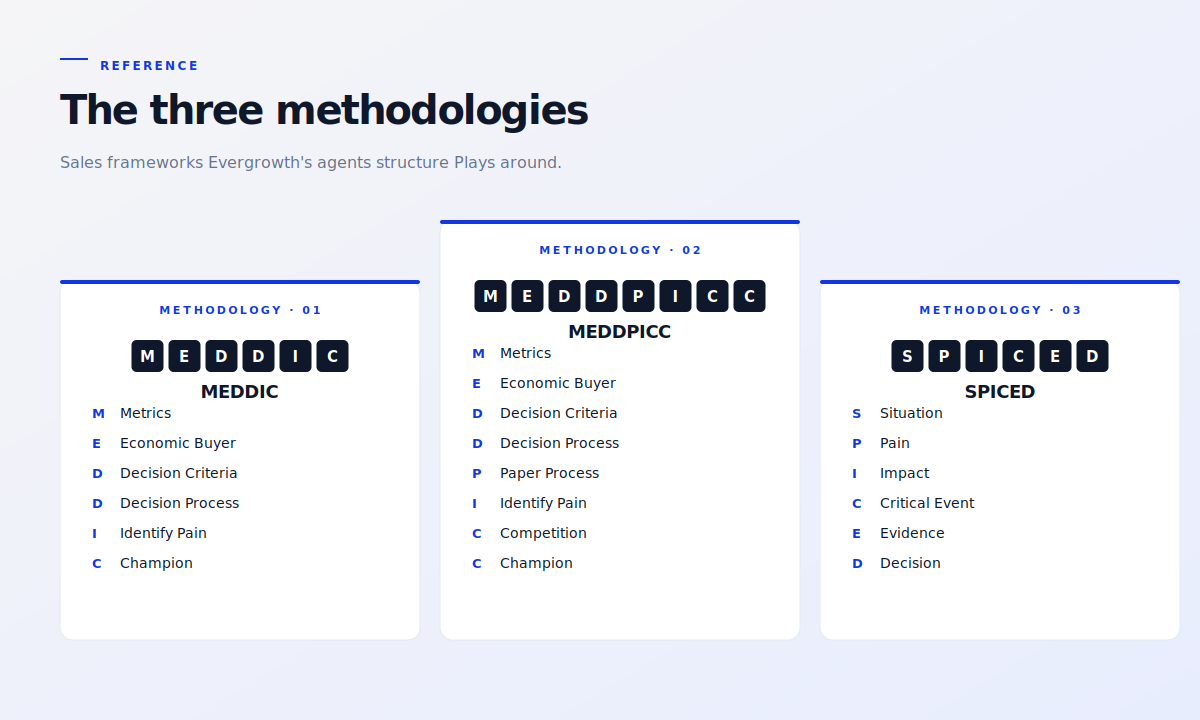

A note on framing before we go further: the three methodologies that come up most often in the rooms I'm in are MEDDIC, MEDDPICC, and SPICED. So I'll use those as the running examples through this piece. The Evergrowth platform isn't tied to any single framework — customers run BANT, ChampPICK, Sandler, MEDDICC, and frameworks they've built themselves. The patterns below apply equally to any of them.

Methodologies don't fail at logic. They fail at adoption.

Every major qualification methodology — MEDDIC, MEDDPICC, SPICED, ChampPICK — encodes the same underlying insight: deals close when you understand the buyer's pain, the impact of fixing it, the decision process, and the champion's incentive to push internally. The frameworks differ in vocabulary and ordering. The claim is consistent.

The data tends to back it up. In one Nordic sales org's own Gong dataset of 674 sales calls, capturing Pain, Impact, or Decision Process each lifted blended win rate by 20 to 21 percentage points. The math is real. The question is whether reps consistently apply it across thousands of touchpoints.

Manager-coached methodology rollouts across multi-country sales teams typically take six months or more to reach steady-state adoption. Behavioural drift is the single biggest threat to ROI — reps who already have full pipelines don't have spare cycles to translate a workshop into mid-call muscle memory.

Most enablement teams recognise the same three symptoms from earlier rollouts:

- Reps fill in Salesforce fields after the call, retrofitting whatever they can remember.

- Managers sample one or two calls per rep per week, so coaching becomes anecdotal rather than systematic.

- Methodology adoption decays as new hires onboard with looser standards than the original cohort.

The fix isn't another workshop. It's making the methodology default — embedded directly in the artefacts reps already open before every call, meeting, and follow-up.

Where the methodology needs to land: the artefacts reps open

When I sit down with a revops or enablement leader and we trace what a rep actually has in front of them in the live moment, the same four artefacts come up every time:

- The account plan they open before a strategic-account meeting

- The cold-call track they read before dialling

- The meeting agenda they walk into a discovery on

- The follow-up email they send within an hour of the call

If the methodology is embedded in these artefacts, the rep doesn't need to remember the framework. They execute the script and the framework lands by default. This is where GTM agents remove the behaviour-change dependency that every methodology rollout quietly carries.

Embedding methodology into account plans

Account plans are the most natural place to bake in deal-qualification methodology. An account plan is already supposed to answer "where do we play, why, and how do we win?" — which maps directly onto the Use Case Appetite plus MEDDIC / MEDDPICC framework AEs reference for strategic accounts.

What changes when GTM agents own the work is the input-to-output flow. Instead of an AE manually researching the account, filling in a template, and trying to remember every Metric / Economic Buyer / Decision Criteria field, agents handle three layers of work.

Three pillars of agentic research, then synthesis

Three flavours of research feed every plan: competitor intel (what's in the customer's stack, where the switching cues are), growth signals (financials, hiring, leadership moves, funding events), and stakeholder mapping (buyers, champions, and decision-makers across the org).

What lands in front of the rep isn't a raw research dump. The engine synthesises rather than aggregates: where multiple agents corroborate a signal, confidence goes up. Where signals contradict, the engine flags it for review. The rep gets a weighted, ranked judgment — not a list of findings to interpret themselves.
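To make "synthesises rather than aggregates" concrete, here is a minimal sketch of how corroboration and contradiction might be scored. It is not Evergrowth's engine: the `Finding` shape, the agent and signal names, and the scoring formula are all assumptions chosen for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    agent: str      # hypothetical agent name, e.g. "competitor_intel"
    signal: str     # hypothetical signal key, e.g. "finance_team_hiring"
    supports: bool  # True if the agent found evidence for the signal


def synthesise(findings):
    """Group findings by signal, score corroboration, flag contradictions."""
    by_signal = defaultdict(list)
    for finding in findings:
        by_signal[finding.signal].append(finding)

    ranked = []
    for signal, group in by_signal.items():
        supporting = len({f.agent for f in group if f.supports})
        opposing = len({f.agent for f in group if not f.supports})
        ranked.append({
            "signal": signal,
            # Toy score (an assumption): rises with each corroborating agent
            # and falls with each contradicting one. With no contradictions,
            # 1 agent gives 0.5 and 2 agents give about 0.67.
            "confidence": supporting / (supporting + opposing + 1),
            "needs_review": supporting > 0 and opposing > 0,
        })
    # Highest-confidence signals come first.
    ranked.sort(key=lambda item: item["confidence"], reverse=True)
    return ranked


findings = [
    Finding("competitor_intel", "legacy_ar_tool_in_stack", True),
    Finding("growth_signals", "legacy_ar_tool_in_stack", True),
    Finding("growth_signals", "finance_team_hiring", True),
    Finding("stakeholder_mapping", "finance_team_hiring", False),
]
for item in synthesise(findings):
    print(item)
```

In this toy run the signal two agents agree on ranks first, while the signal with one supporting and one opposing agent ranks lower and is marked for review.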

Three rep-ready sections, each answering a question

The output is structured around the three questions a rep is actually asking when they open the account:

- Business Summary — "Who am I dealing with?" Company snapshot, competitor analysis, technical and IT architecture, recent context (financials, leadership moves, growth events).

- Use Case Appetite — "Where do we play, and why?" Sub-scenarios ranked by fit, confidence per scenario, reasoning per ranking, white-space view of departments and regions not yet using the product.

- MEDDPICC Strategy — "How do we win it?" Quantified metrics, named economic buyer, decision criteria and process, paper process, implicated pain (today's symptom plus downstream cost), and competition (who else is in the stack and where the gaps are).

A real example: a Fortune 100 agribusiness's Accounts Receivable plan

Take a strategic account being evaluated for an AR automation use case — say, a Fortune 100 agribusiness running a large-scale credit-to-cash operation. The MEDDPICC section of the account plan doesn't list headers and leave the rep to fill them in. It names the likely economic buyer (CFO or Group Treasurer, owning working-capital performance and DSO targets), suggests champion candidates with rationale ("the Finance Digitalisation and Automation Lead in the Credit to Cash function — directly owns AR automation outcomes; strong candidate to champion AR process mining"), and articulates implicated pain in terms the rep can use on the live call:

"Manual collections and dispersed AR staffing, even after their existing AR automation deployment, imply persistent high-touch invoices that raise cost-to-collect and slow cash conversion; if left unaddressed, this limits the impact of automation investments and constrains working-capital release from AR."

The rep doesn't open the account plan and start qualifying. They open it and start executing. Strategy "current state" and "suggested next steps" are calculated for them, not by them.

Embedding methodology into Plays

Account plans cover the strategic layer. Plays cover the tactical layer: the cold-call track, the meeting agenda, the email sequence, the follow-up. Before agentic workflows, this is where SPICED and MEDDIC discipline most often broke down, because reps were working in the live moment with no time to consult a framework.

The fix is to bake the methodology directly into the script. Here's what that looks like for a SPICED-structured cold-call Play — illustrative, drafted by a Play agent for a regional contractor account:

| SPICED | What the rep actually says on the call |
|---|---|
| Situation | "Hi [name], I saw you've got crews running out of the [region] depot and you're hiring three more service techs. Sounds like volume is growing fast. Have I caught you between jobs?" |
| Pain | "Teams your size usually tell me that scheduling those extra crews and tracking what they're billing back is the part that doesn't scale. Does that sound about right for you?" |
| Impact | "For contractors who got that right, it usually means an extra job or two a week per crew without adding admin headcount. Is that the kind of outcome you'd want from a tool like this?" |
| Compelling event | "With the new depot opening in Q1, are you fixing this before you scale up, or do you plan to figure it out on the fly?" |
| Decision process | "If this turned out to be the right fit, who else would weigh in on a decision like this: finance, IT, the owner?" |

Capture happens by reading the script, not by remembering the framework. The rep doesn't need to mentally tag each question with a SPICED letter. The structure is invisible to the rep but visible in the resulting Gong transcript and Salesforce capture rate.
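One way to picture "invisible to the rep but visible in the capture rate" is to treat a Play as tagged data. The sketch below is an illustration only: `PlayStep`, `rep_view`, and `capture_rate` are hypothetical names, the lines are shortened from the table above, and how an answer gets detected in a transcript is left out entirely.

```python
from dataclasses import dataclass

# Stage labels follow the cold-call table above.
SPICED_STAGES = ("Situation", "Pain", "Impact", "Compelling event", "Decision process")


@dataclass(frozen=True)
class PlayStep:
    stage: str  # methodology tag, kept for reporting and hidden from the rep
    line: str   # what the rep reads on the call


def rep_view(play):
    """What the rep sees: the lines in order, with no methodology labels."""
    return [step.line for step in play]


def capture_rate(play, answered_lines):
    """Share of SPICED stages whose scripted line got an answer on the call."""
    captured = {step.stage for step in play if step.line in answered_lines}
    return len(captured & set(SPICED_STAGES)) / len(SPICED_STAGES)


cold_call = [
    PlayStep("Situation", "Sounds like volume is growing fast. Have I caught you between jobs?"),
    PlayStep("Pain", "Does that sound about right for you?"),
    PlayStep("Impact", "Is that the kind of outcome you'd want from a tool like this?"),
    PlayStep("Compelling event", "Do you plan to figure it out on the fly?"),
    PlayStep("Decision process", "Who else would weigh in on a decision like this?"),
]

# Assume the prospect answered three of the five questions.
answered = {cold_call[0].line, cold_call[1].line, cold_call[4].line}
print(rep_view(cold_call))
print(capture_rate(cold_call, answered))
```

The rep only ever handles the output of `rep_view`; the stage tags exist so that reporting can be computed afterwards without the rep labelling anything.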

The same logic extends across artefact types:

- Meeting prep agendas include persona-shaped discovery questions with implicit MEDDIC framing, alongside ranked objections and a decision-navigation script

- Email sequences lead with Pain hypotheses anchored in researched signals, not generic value props

- Follow-up emails reference what the prospect actually said on the prior call — pulled from conversation intelligence transcripts, not the rep's memory

- Voicemail scripts and DM drafts carry the same structural backbone so capture doesn't depend on whether the rep got through

Closing the loop with Gong — methodology that stays live

The risk with any account plan or Play — and a pattern revops leaders recognise from earlier rollouts — is that it becomes a one-time artefact: generated once, never revisited, increasingly stale as the relationship evolves. That's where conversation intelligence integration matters.

Gong (or any conversation intelligence platform) captures what was actually said on the call. When that transcript flows back into the GTM workspace, agents can do three things automatically:

- Update the account plan with newly captured methodology elements: the specific Pain the prospect named, the Decision Process they hinted at, the Champion they identified by name

- Draft the next Play with prior-conversation context already woven in, so the follow-up email references the specific concern the prospect raised rather than a generic "great chatting with you today"

- Re-rank the use case appetite if new signals emerged that shift the fit, for instance a buyer mentioning that an internal initiative just got deprioritised

In one Nordic sales org's deployment, this loop turned Gong from a coaching artefact (transcripts managers occasionally review) into a feedback engine (transcripts that feed the next dial). The methodology stays current because the agents update it after every interaction, not because a manager opens a QBR doc once a quarter.

This is also where existing investments earn a second, compounding return. The Gong subscription, the methodology rollout, and the account research stack are usually bought separately and run in parallel. Plays plus a closed loop turn three parallel investments into one compounded workflow.
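The three post-call steps in this section (update the plan, draft the next Play, re-rank the use cases) can be sketched as plain functions. This is a rough sketch under stated assumptions: the plan is a simple dictionary, the captured elements and fit adjustments are taken as already extracted from the transcript, and no Gong or Evergrowth API is called or implied.

```python
def apply_call_update(plan, captured, fit_adjustments):
    """Return a new plan with captured elements merged and fit scores adjusted."""
    updated = {
        "elements": {**plan["elements"], **captured},
        "use_cases": dict(plan["use_cases"]),
    }
    for use_case, delta in fit_adjustments.items():
        updated["use_cases"][use_case] = updated["use_cases"].get(use_case, 0.0) + delta
    return updated


def ranked_use_cases(plan):
    """Use cases ordered from best fit to worst."""
    return sorted(plan["use_cases"], key=plan["use_cases"].get, reverse=True)


def draft_follow_up(contact_name, plan):
    """Open the follow-up with the captured Pain when there is one."""
    pain = plan["elements"].get("Pain")
    if pain is None:
        return f"Hi {contact_name}, thanks for the time today."
    return f"Hi {contact_name}, you mentioned that {pain}. Here is how we would approach it."


# Hypothetical plan state before the call; the scores are made-up fit values.
plan = {"elements": {}, "use_cases": {"AR automation": 0.8, "AP automation": 0.6}}

# Hypothetical output of transcript analysis after the call.
captured = {
    "Pain": "collections are still manual after the last rollout",
    "Decision process": "finance and IT both sign off",
}
fit_adjustments = {"AR automation": -0.3}  # e.g. an internal initiative was deprioritised

updated = apply_call_update(plan, captured, fit_adjustments)
print(ranked_use_cases(updated))
print(draft_follow_up("Sam", updated))
```

Returning a new plan instead of mutating the old one keeps the pre-call state available for comparison, which is one simple way to see what a given call changed.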

Buyer committee coverage without the SalesNav grind

MEDDIC, MEDDPICC, and SPICED all share an obsession with mapping the buying committee — Economic Buyer, Champion, Decision Process participants, technical and business validators. The methodology says: don't go single-threaded on a deal worth six figures.

The reality across the industry is that mapping the committee is slow, manual work. For a single strategic account with six-plus stakeholders, a rep has to open SalesNav, filter by company and seniority, identify likely buyers across finance, ops, IT, and procurement, validate each one's current role, find their email and direct dial, and then draft outreach that doesn't sound like copy-paste. That's an afternoon. Across a hundred-account ABM tier, it's a quarter.

Contact Finder agents collapse this. Given a use case (say, AR automation), the agents:

- Map the buying committee against the persona definitions in the GTM brain — Economic Buyer, Champion, technical validator, procurement gatekeeper

- Find named individuals at the company who fit each persona

- Enrich with verified email and direct dial via a multi-vendor waterfall

- Draft persona-specific outreach copy, anchored in the same researched signals the account plan references

The rep moves from "find and craft" to "review and send." For the MEDDIC requirement that you cover the full committee, the work goes from manual aspiration to default execution.
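The "multi-vendor waterfall" in the enrichment step is a general pattern: try data sources in priority order and stop at the first verified result. A minimal sketch follows, assuming each vendor is a lookup function that returns either `None` or a dictionary with `email` and `verified` keys. The vendor names and stub functions are placeholders, not real providers or APIs.

```python
def enrich_contact(name, company, vendors):
    """Try each vendor in order and stop at the first verified email."""
    for vendor_name, lookup in vendors:
        result = lookup(name, company)
        if result is not None and result.get("verified"):
            return {"name": name, "email": result["email"], "source": vendor_name}
    # No vendor produced a verified email.
    return {"name": name, "email": None, "source": None}


# Placeholder vendors standing in for real enrichment providers.
def vendor_a(name, company):
    return None  # no match found


def vendor_b(name, company):
    return {"email": "guess@example.com", "verified": False}  # unverified guess


def vendor_c(name, company):
    return {"email": "j.doe@example.com", "verified": True}


vendors = [("vendor_a", vendor_a), ("vendor_b", vendor_b), ("vendor_c", vendor_c)]
contact = enrich_contact("J. Doe", "Example Agri Co", vendors)
print(contact)
```

Ordering the list by cost or by historical hit rate is a common design choice, since later vendors are only queried when earlier ones come back empty or unverified.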

Where this matters most: enterprise sales motions

Two enterprise customers I've worked with closely illustrate where the pattern compounds hardest.

Aqfer — strategic accounts, 240-day cycles, narrow ICP

Aqfer runs 240-day sales cycles into a deliberately narrow ICP — only 75-100 true target accounts globally, but very high ASP. Each strategic account previously took 4-5 hours of manual research across 5-6 tools just to build a meaningful POV. Their sales team designed 35+ custom signals they wanted tracked per account. With Evergrowth agents, research time per account dropped to 11-12 minutes.

"The breadth of information now is the big win: it allows us to go through accounts faster and say, 'Look, we have an airtight case. We have the right business decision makers. We've got board involvement. Let's move.' If a deal isn't going anywhere, we don't bang our head against the wall; we just move on and keep the pipeline moving."

Tom Burg, Aqfer

The result wasn't just speed — it was the ability to swap accounts quickly when signals went stale. Methodology discipline (the right buyers, board involvement, an airtight case) became something the agents enforced rather than something individual reps had to reach for from memory.

ARIS (Software AG) — signal-driven enablement at global scale

ARIS runs a global enterprise GTM with significant GDPR constraints and a large enablement footprint. Their challenge wasn't a single methodology — it was making signal-driven enablement scale across countries and partners. Custom signals, personas, and Plays now feed agents that run research, prioritisation, and messaging on every account. Account research and planning compressed from hours to seconds. New hires onboard directly onto agentic workflows.

"Seeing reps go into an AI system and start running their own agentic workflows — that's something I wouldn't have imagined two years ago. Now, with agentic AI, the platform does the work for you 24/7. That's the game changer."

Sven Roeleven, SVP Solution Management, Software AG

Both cases share the pattern: methodology and signal discipline that would have required a heroic individual rep to apply consistently is now embedded in the artefacts every rep already opens.

Adoption is the only number that matters

Sales methodologies have always worked on paper. Deals where MEDDIC is fully captured close at meaningfully higher rates than deals where it isn't. Deals where SPICED Pain, Impact, and Decision Process are captured close at meaningfully higher rates than deals where they aren't. The math has never been the problem.

The problem — the one every revops and enablement leader recognises from the industry at large — is that across thousands of touchpoints, methodology adoption decays. Reps default to what's easy. New hires never reach the original cohort's discipline. The win-rate gap between teams with and without methodology adoption isn't a logic gap. It's an execution gap.

GTM agents close the execution gap by removing the dependency on rep memory. When the account plan already names the Economic Buyer, when the cold-call track already embeds the Pain probe, when the follow-up already references what was captured on the last call — the methodology stops being a checklist and starts being the fabric of the work.

That's the difference between a methodology rollout that depends on six months of manager coaching to land, and one that lands the day the Plays go live.

Methodology rollouts fail at adoption, not at logic. The gap is between the training deck and the live call — and it's a behaviour problem, not a framework problem.

Account plans can embed MEDDPICC strategy directly. Named economic buyers, suggested champion candidates, implicated pain — calculated for the rep, not blank fields they have to fill in.

Plays bake SPICED probes into the script reps actually read. Capture happens by reading the cold-call track, not by remembering the framework mid-dial.

Gong closes the loop. Captured signals from each call feed the next Play, so the methodology stays live instead of becoming a static one-time artefact.

The pattern generalises. MEDDIC, MEDDPICC, and SPICED are the most common methodologies among Evergrowth's enterprise customers, but the same logic applies to any framework — BANT, ChampPICK, Sandler, MEDDICC, or bespoke ones customers have built themselves.